A data-backed look at how Max explores v0 and what it teaches me about personal software.

Feb 2, 2026

In early December, my son Max asked me to help him build something on his iPad.

In the old world, I would have opened Scratch, explained loops and variables, and watched the energy drain out of his face halfway through. That’s not a knock on Scratch — it’s just not how kids want to create when the interface is talking.

So instead, we opened v0 (pronounced “v‑zero”), an AI app builder from Vercel. You describe an app in natural language, it generates code, and you get a live preview you can run and share.

This is the broader shift happening in software too. A lot of engineers are moving to agent-first tools like Claude Code and OpenAI’s Codex. I wrote about that shift at greater length in “Coding in 2025”: the interface stops being “type code” and becomes “tell an agent what you want.”

I wanted to see what happens when you give that interface to a seven‑year‑old.

Before I tell stories, here’s the denominator.

In this window (December 1, 2025 → February 2, 2026), there were 109 chats on this account. Of those, 106 chats generated at least one version (a runnable preview).

So this isn’t a few cute projects. It’s a real build portfolio: lots of starts, a handful of obsessions, and repeated iteration loops.

He didn’t really need help. He typed. He spoke. He asked for what he wanted. When something broke, he kept going until it worked.

And he burned through credits fast: the free tier in a couple of days, then my pro credits a few days after that. When his mom said I was allowed to buy him credits because “it’s educational,” I realized we were in a new era of parenting.

After about a month, I had a question I couldn’t shake:

What does learning look like when the interface is an agent, not a programming language?

So I exported the data and treated it like a logbook.

I exported chats, messages, and versions from v0, then fed the raw JSON directly into a coding agent to compute stats and help me sift through the logbook.
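A minimal sketch of how headline counts like these could be computed from such an export. The JSON shape here (a list of chats, each with `messages` and `versions`) and every key name are assumptions for illustration; the real v0 export may be structured differently, and the sample data is made up.

```python
import json

# Hypothetical export shape: the real v0 export format may differ.
SAMPLE_EXPORT = """
[
  {"id": "chat-1", "title": "Tower game",
   "messages": [{"role": "user", "content": "make a tower game"},
                {"role": "assistant", "content": "Here is a first version."},
                {"role": "user", "content": "make it mobile"}],
   "versions": [{"id": "v1"}, {"id": "v2"}]},
  {"id": "chat-2", "title": "Empty start",
   "messages": [{"role": "user", "content": "hello"}],
   "versions": []}
]
"""


def count_user_prompts(chat):
    """Count messages written by the user (not the assistant) in one chat."""
    return sum(1 for m in chat.get("messages", []) if m.get("role") == "user")


def summarize(chats):
    """Return the denominator-style counts: chats, chats with a version, prompts, versions."""
    return {
        "chats": len(chats),
        "chats_with_version": sum(1 for chat in chats if chat.get("versions")),
        "user_prompts": sum(count_user_prompts(chat) for chat in chats),
        "versions": sum(len(chat.get("versions", [])) for chat in chats),
    }


def top_chats(chats, n=15):
    """Rank chats by user prompt count; titles may repeat, so rows are not merged."""
    rows = [(chat["title"], count_user_prompts(chat)) for chat in chats]
    return sorted(rows, key=lambda row: row[1], reverse=True)[:n]


chats = json.loads(SAMPLE_EXPORT)
summary = summarize(chats)
ranking = top_chats(chats)
print(summary)
print(ranking)
```

Ranking rows rather than keying by title matters here, because several chats in a portfolio like this can share the same name.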

Because this v0 account includes both his prompts and some of my early testing prompts, I tried to separate the signal from the noise:

I use two lenses in the analysis:

Two quick definitions:

This is the compact behavior view before we go project-by-project.

[Chart: Prompts per Week (Dec 2025 → Feb 2026). Callouts: 700 text; ~1.13/prompt; 418 prompts. Busiest week: Dec 8, 2025 (177 prompts).]
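A weekly prompt count like this one can be sketched by bucketing each prompt's timestamp into the week it falls in. The timestamps below are made up, and starting weeks on Monday is an assumption (it is at least consistent with Dec 8, 2025 being a Monday).

```python
from collections import Counter
from datetime import datetime, timedelta


def week_start(timestamp):
    """Return the Monday of the week containing an ISO-8601 timestamp string."""
    day = datetime.fromisoformat(timestamp).date()
    return day - timedelta(days=day.weekday())  # weekday() is 0 for Monday


def prompts_per_week(timestamps):
    """Count prompts per week, keyed by that week's Monday."""
    return Counter(week_start(ts) for ts in timestamps)


# Made-up timestamps, not taken from the real logbook.
sample = [
    "2025-12-08T09:15:00",
    "2025-12-10T17:40:00",
    "2025-12-14T20:05:00",  # a Sunday, so it still belongs to the Dec 8 week
    "2025-12-15T08:00:00",
]

weekly = prompts_per_week(sample)
busiest_week, busiest_count = max(weekly.items(), key=lambda item: item[1])
print(busiest_week.isoformat(), busiest_count)
```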

What the scoreboard says:

So the behavior is: start fast, kill fast, then double down hard on the few that matter.

This is what that build portfolio looks like in practice.

Showing the top 15 chats by prompt count, captured as square screenshots from each project’s latest v0 demo preview.

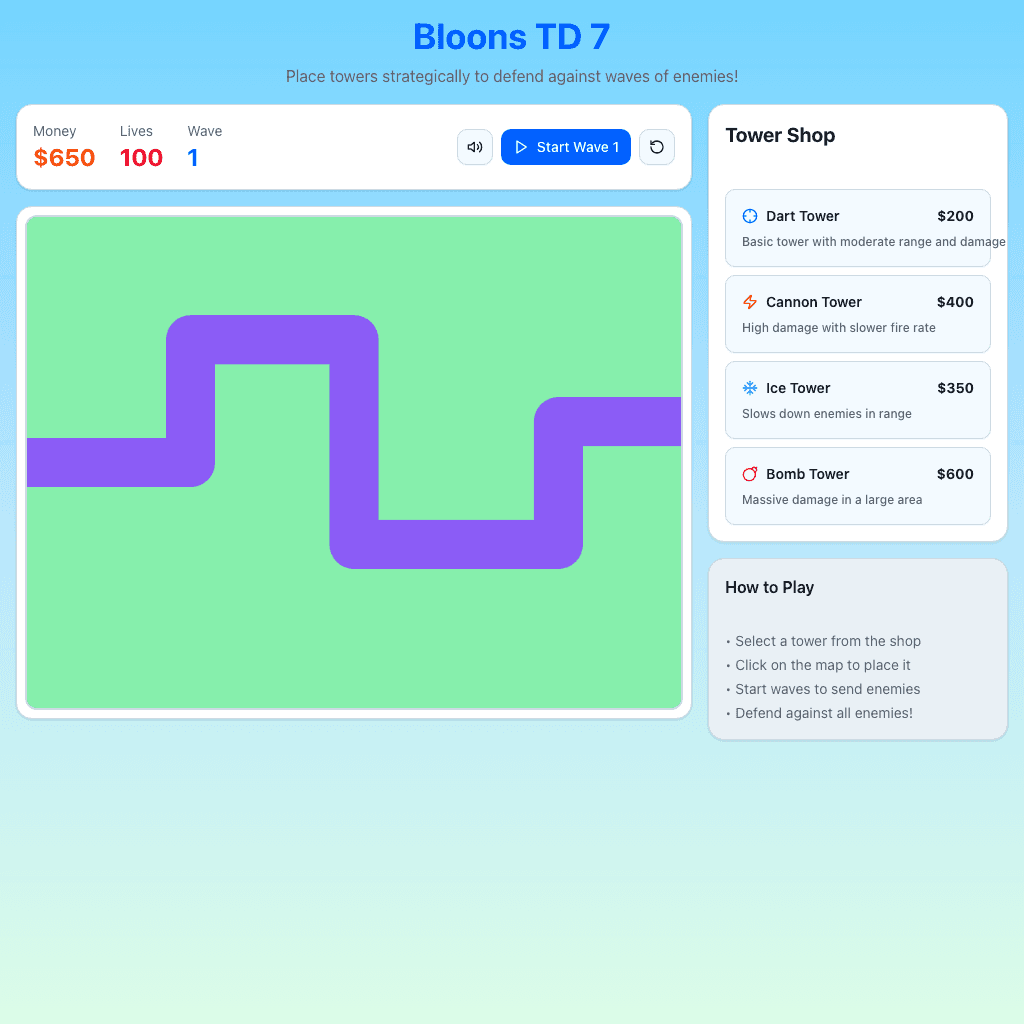

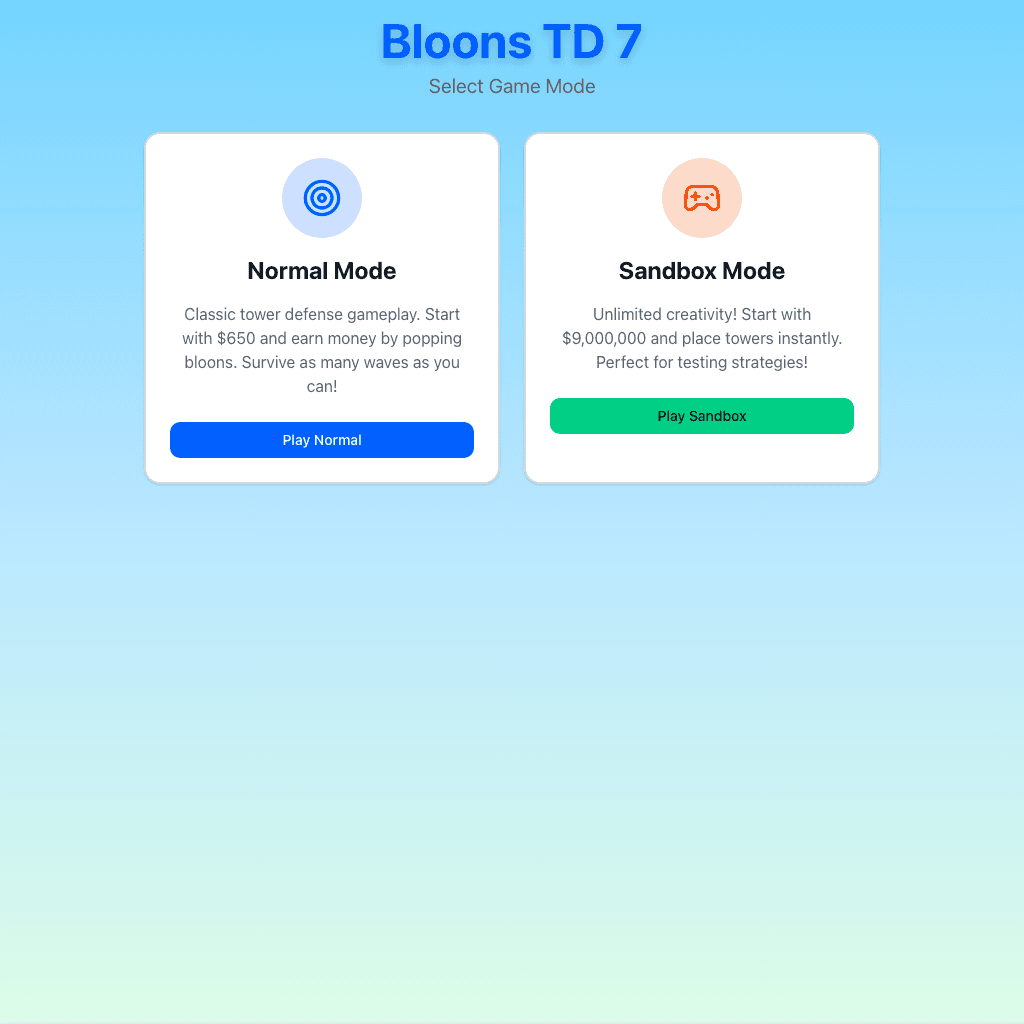

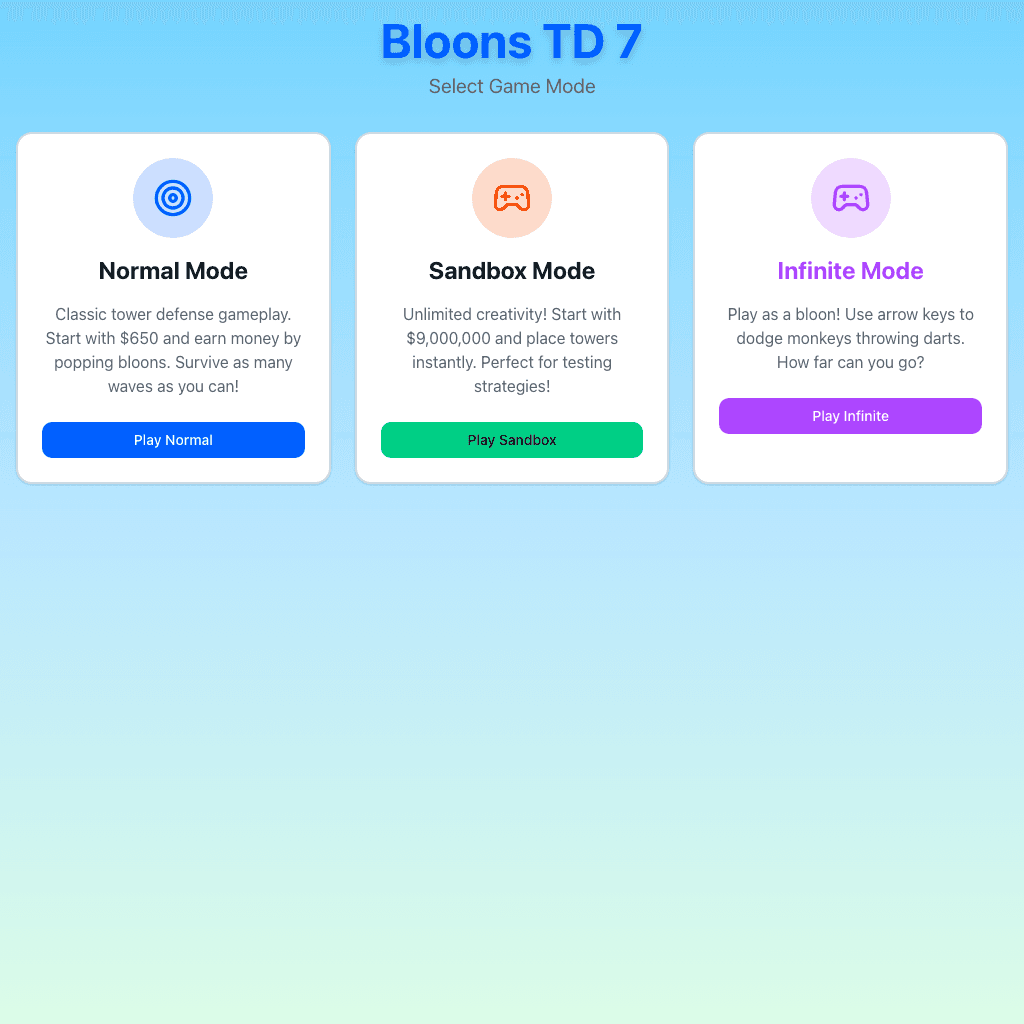

1. Bloons TD 7 App (84 prompts)

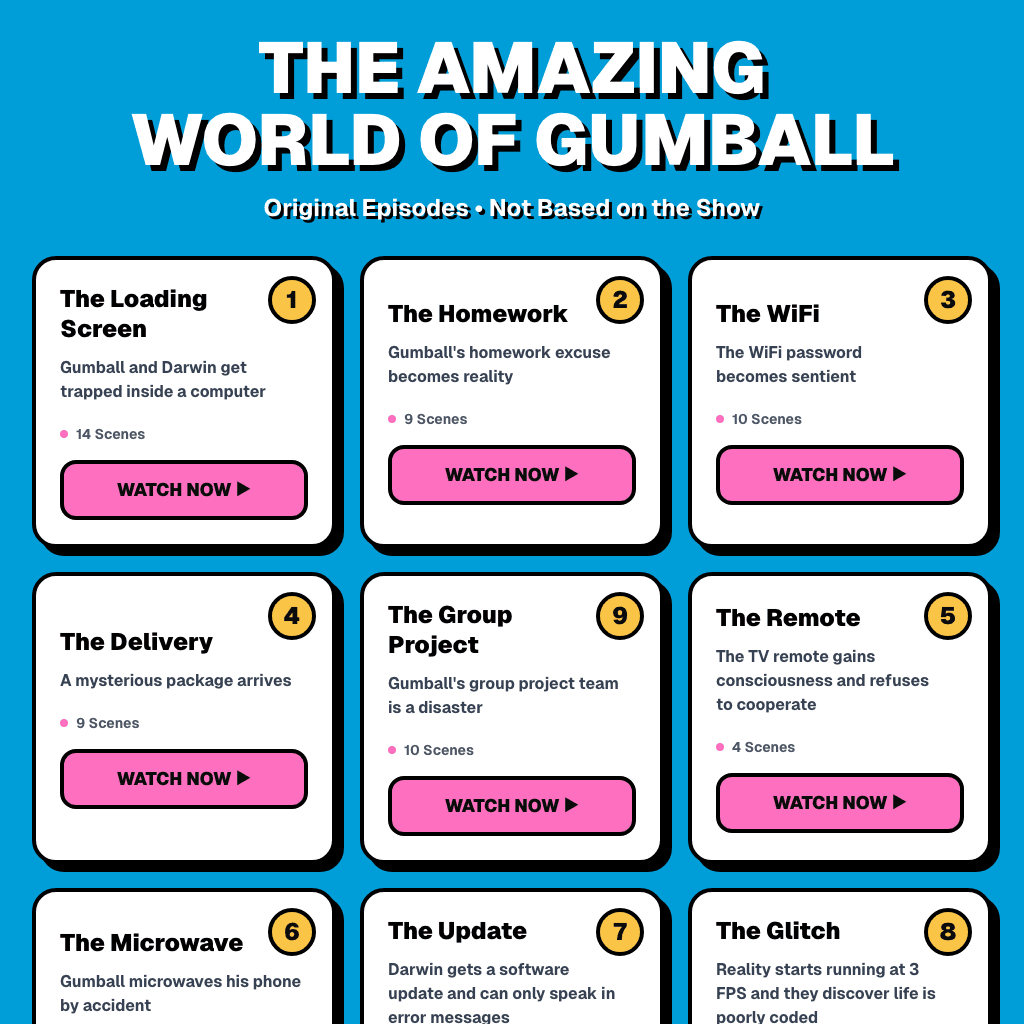

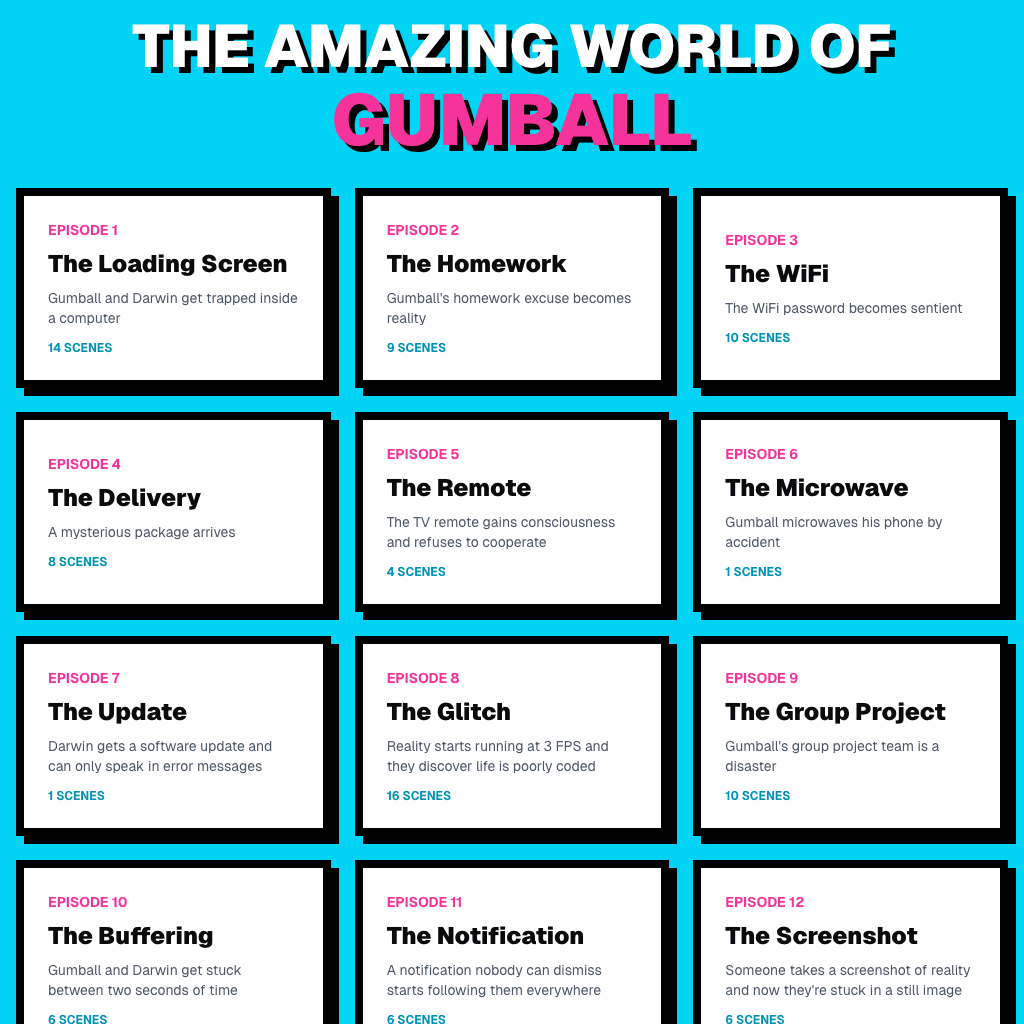

2. Vercel AI app (54 prompts)
3. Gumball video (42 prompts)
4. AI assistant (25 prompts)
5. Bloons TD eight (25 prompts)
6. Bloons TD game (20 prompts)
7. Zelda 3D game (19 prompts)
8. Bloons TD game (13 prompts)

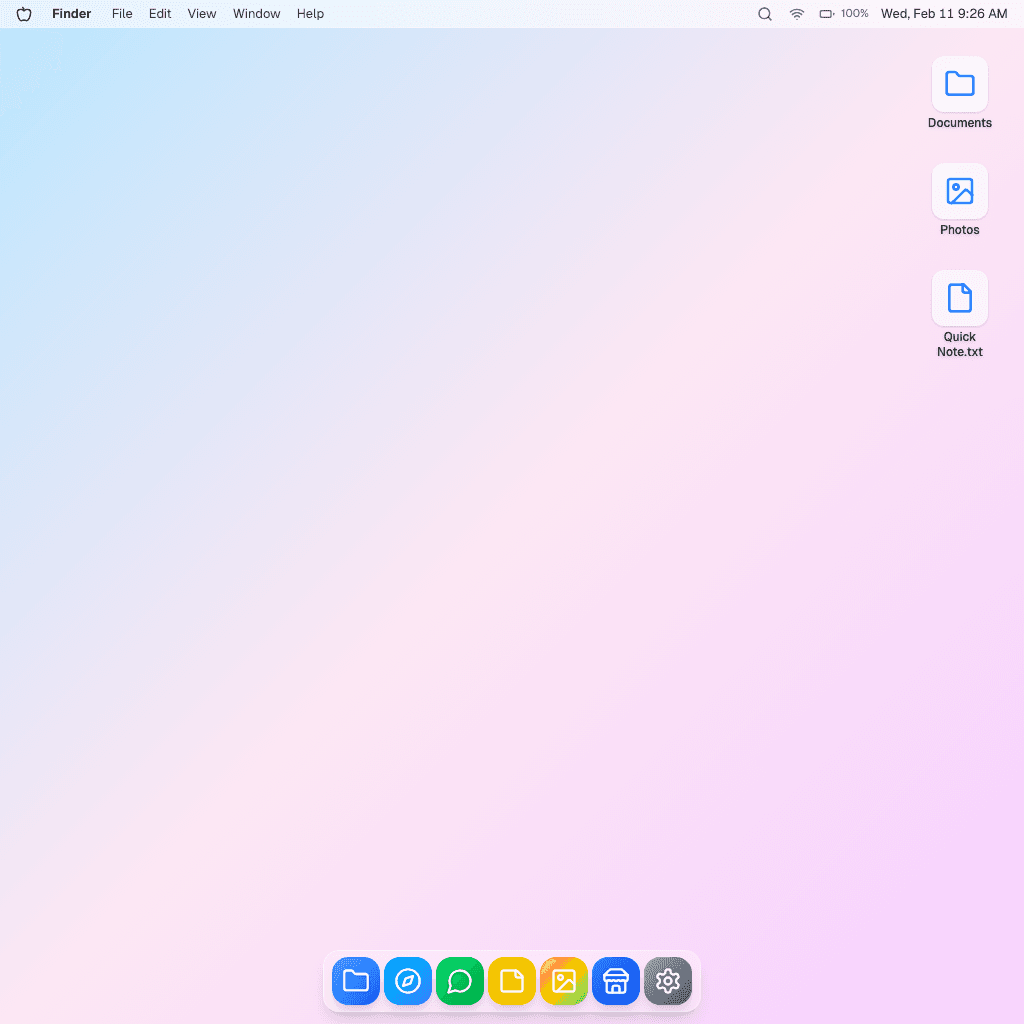

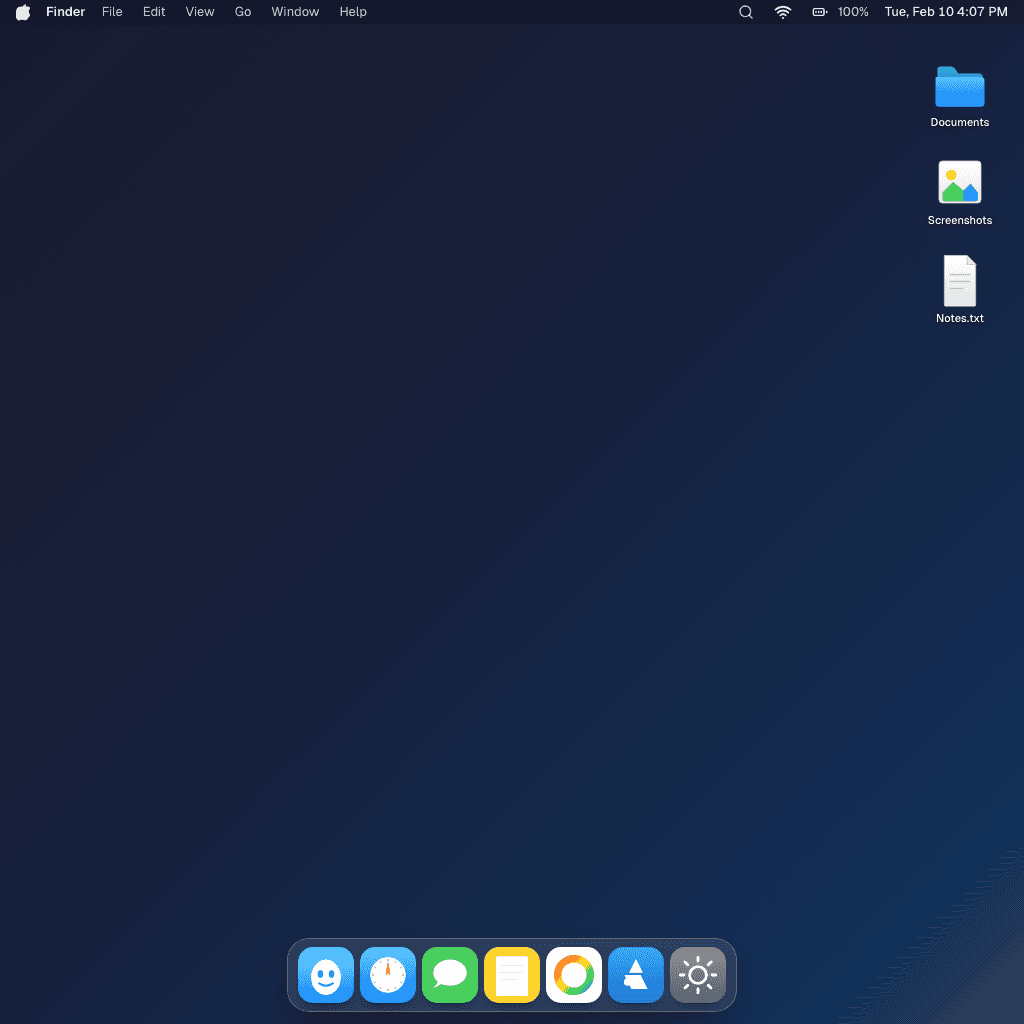

9. Mac on iPad (13 prompts)
10. Christmas 3D app (12 prompts)
11. Private YouTube app (12 prompts)
12. Meme app (11 prompts)
13. Watch app (11 prompts)
14. World generation (11 prompts)
15. Interactive word player (10 prompts)

These were the patterns that only became obvious after reading the full logbook.

Almost half his prompts are 10 words or fewer. He doesn’t explain; he issues directives:

It’s the language of someone who assumes the computer will understand intent.

Excluding the pasted error boxes, 385 of 629 prompts (61%) start with “make…”. He’s not describing implementation; he’s describing outcomes.

In this window, 71 times he pasted an error box (“the code returns the following error…”) and asked the system to fix it.
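Counts like these come down to a few simple rules applied to each prompt. The sketch below is one way to do it, not the exact method used for the post: the error-box marker is the phrase quoted above, while the "first word is make" rule, the 10-word cutoff applied after excluding error boxes, and two of the sample prompts are assumptions for illustration.

```python
ERROR_BOX_MARKER = "the code returns the following error"  # phrase quoted in the post


def is_error_box(prompt):
    """True if the prompt looks like a pasted error box."""
    return ERROR_BOX_MARKER in prompt.lower()


def starts_with_make(prompt):
    """True if the first word is 'make' (case-insensitive). Assumed rule."""
    words = prompt.split()
    return bool(words) and words[0].lower() == "make"


def prompt_stats(prompts):
    """Split prompts into error boxes and typed prompts, then count directive patterns."""
    typed = [p for p in prompts if not is_error_box(p)]
    return {
        "total": len(prompts),
        "error_boxes": len(prompts) - len(typed),
        "typed": len(typed),
        "make_first": sum(1 for p in typed if starts_with_make(p)),
        "short": sum(1 for p in typed if len(p.split()) <= 10),
    }


sample_prompts = [
    "MAKE IT LIVE!!!!!",                                     # quoted in the post
    "I HAVE TO TELL YOU THIS EVERY TIME MAKE IT MOBILE",     # quoted in the post
    "make the towers shoot faster",                          # made up
    "The code returns the following error: (details) fix",   # made up
]

stats = prompt_stats(sample_prompts)
make_share = stats["make_first"] / stats["typed"]
print(stats, f"{make_share:.0%}")

# Sanity check on the reported figure: 385 of 629 typed prompts.
reported_share = f"{385 / 629:.0%}"
print(reported_share)
```

Note that the 629 typed prompts plus the 71 error boxes add up to 700 text prompts, so the choice of denominator changes the percentage noticeably.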

That’s not “I’m stuck.” That’s “next step.”

The headline: most of the time he’s just building. But roughly 1 in 4 text prompts are “friction” — debugging, getting the look right, making it work on mobile, or trying to publish.

That friction tax is where the most interesting product questions show up: memory, taste, and shipping.

He keeps having to repeat constraints that feel obvious:

I HAVE TO TELL YOU THIS EVERY TIME MAKE IT MOBILE

Across the window, he explicitly asked for mobile/iPad support 25 times. A new chat feels like starting over.

He’ll iterate on mechanics all day. What he hates is when the output looks wrong.

Across the logbook, he had 61 prompts that were explicitly about how things look (images, graphics, style) — almost as many as the 71 pasted error-box prompts.

The Gumball project below is the clearest example of this: once mechanics are close, taste is the real bottleneck.

The AI Buddy project is where the future shows up… until it needs a link:

AI Buddy (54 prompts). The future… until you need a URL.


If you can’t ship, the toy doesn’t feel real.

And then the most honest version of this desire:

MAKE IT LIVE!!!!!

I reread every user + assistant turn across one early project, the three core projects, and one late project. The same payoff loop keeps showing up: ask for a world, hit a break, expand scope, run into realism walls, keep iterating anyway.

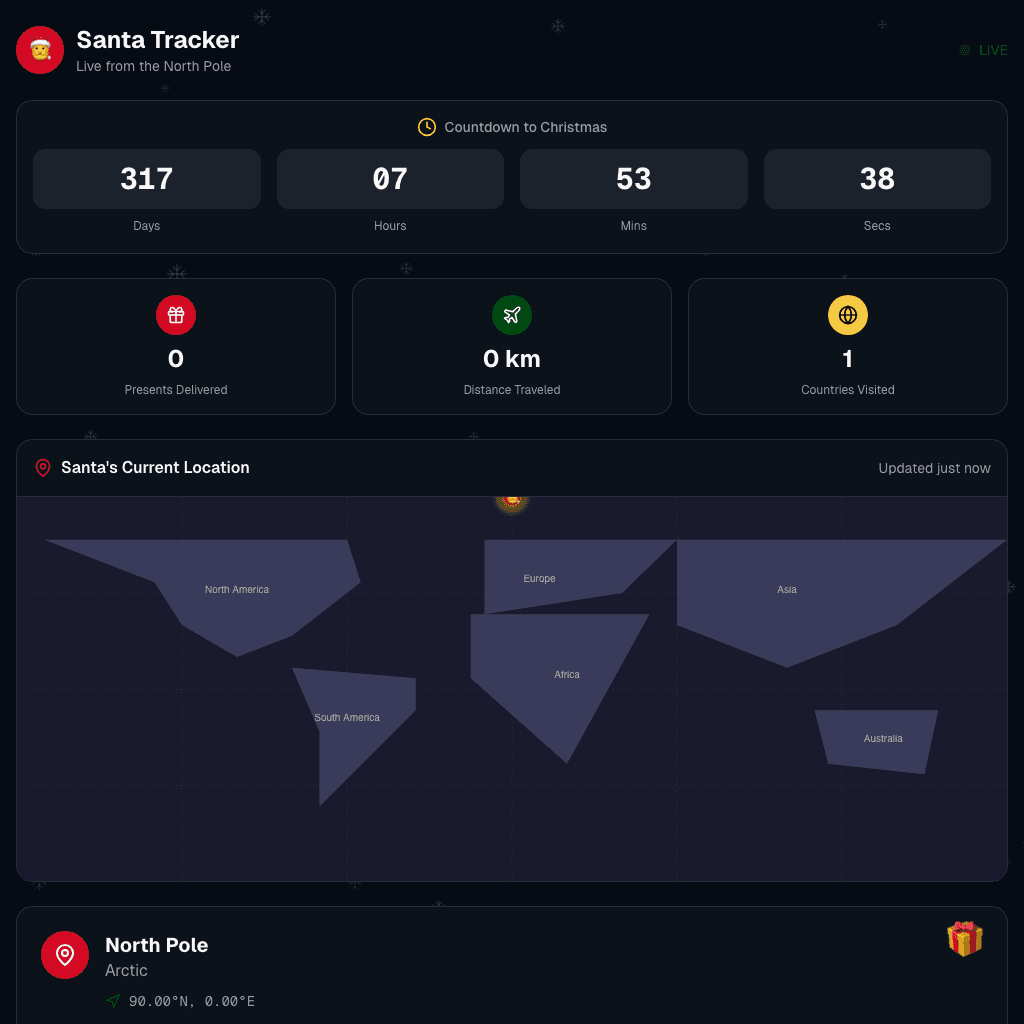

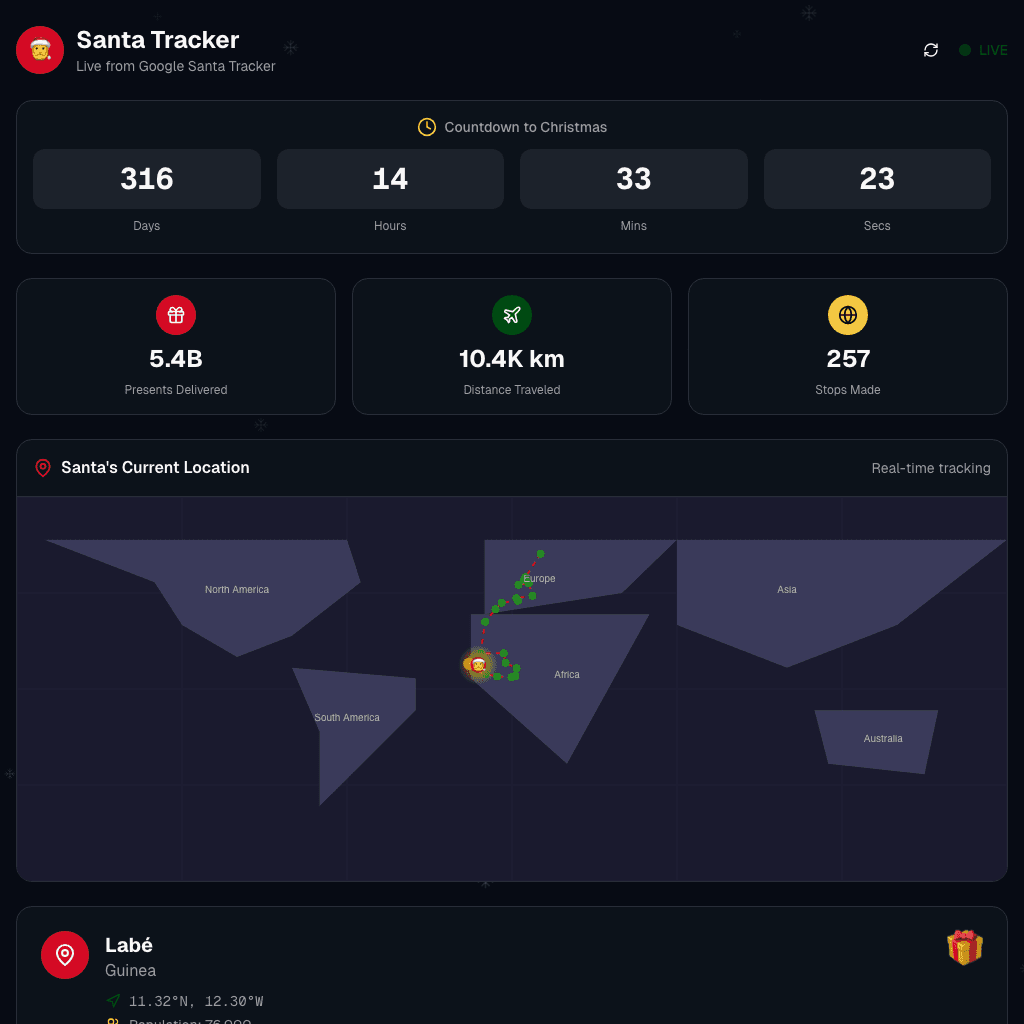

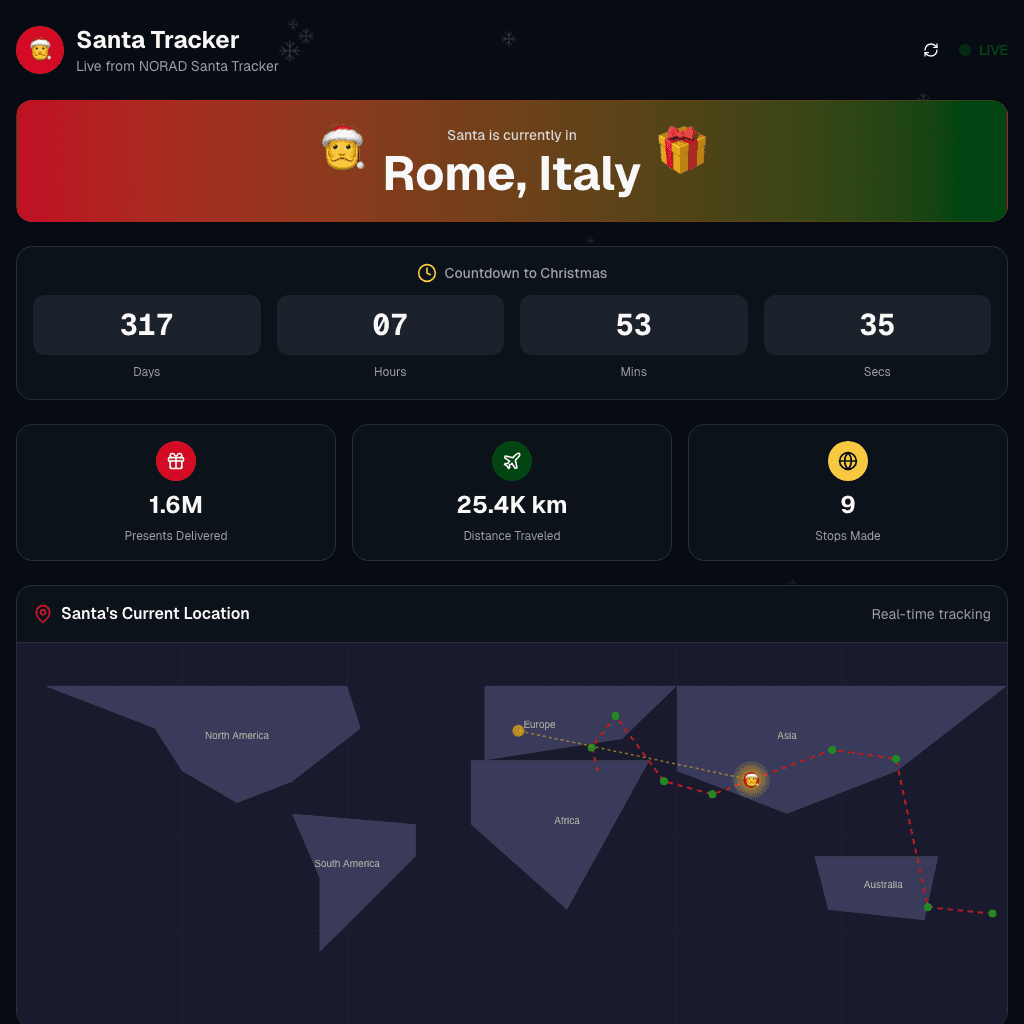

Early prompt focus: make a real Santa tracker with live location. Middle prompt focus: fix broken movement and frozen stop counts. Late prompt focus: wire NORAD data and keep Santa moving.

Even after errors and data failures, the ending ask stays simple: make it actually tell us where Santa is.

Early prompt focus: core loop fixes (tower attacks + pathing). Middle prompt focus: modes, maps, and upgrade systems. Late prompt focus: balancing, regression fixes, and replayability.

This is the clearest long-run product loop in the logbook: big scope growth, constant break-fix cycles, and repeated returns over multiple days.

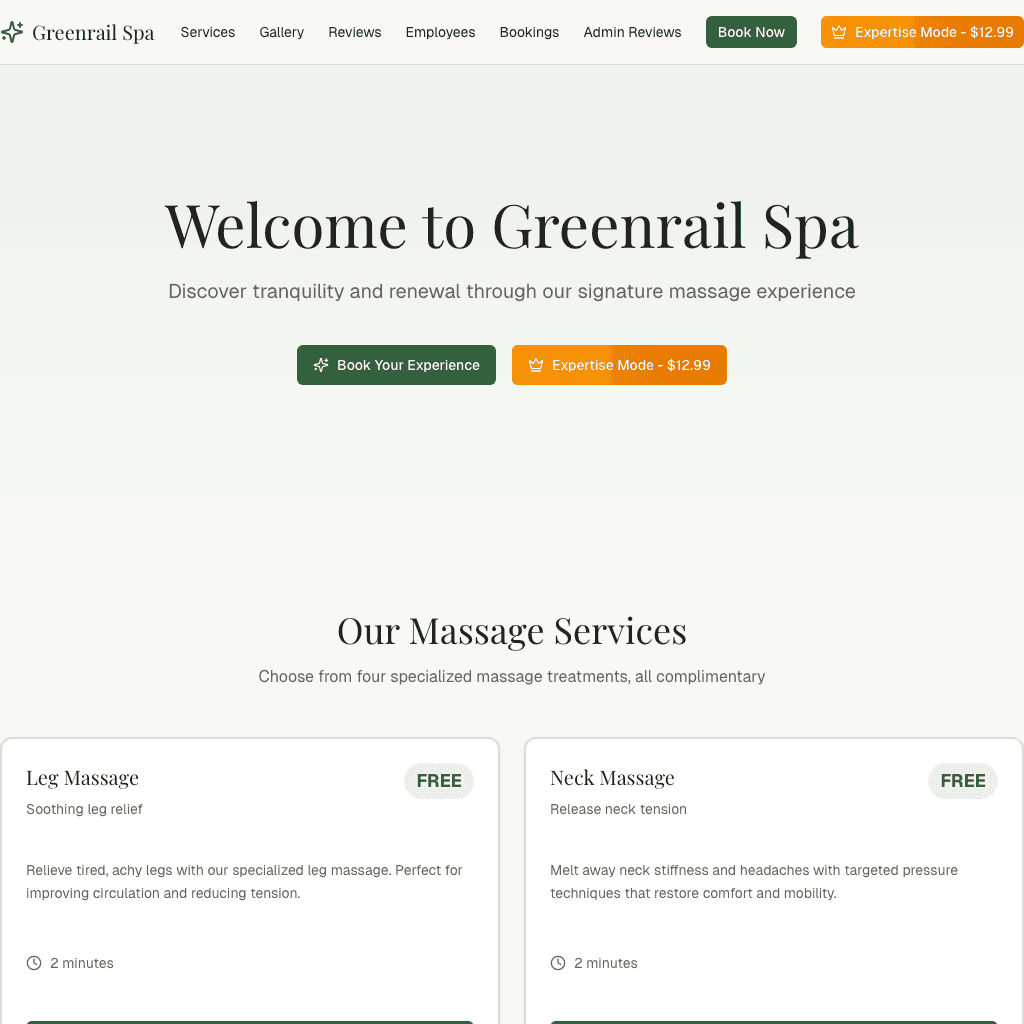

Early prompt focus: stand up a free spa website quickly. Middle prompt focus: booking flow, admin login, and gallery updates. Late prompt focus: persistence, notifications, and operations.

This arc moves from pretend-play into real product behavior fast, and then stalls on reliability details that make software feel truly usable.

Early prompt focus: get a Gumball-style story started. Middle prompt focus: character fidelity and image-per-scene layout. Late prompt focus: expand episodes while keeping style consistency.

This is the strongest evidence that taste alignment is a first-order problem, not just polish after the build “works.”

Early prompt focus: build the Mac-on-iPad world shell. Middle prompt focus: App Store, Safari, and downloads. Late prompt focus: persistence, media behavior, and desktop polish.

This is the most compressed ambition arc in the dataset: 65 versions from 13 prompts, with platform-level asks appearing almost immediately.

Across these five arcs, the progression is consistent: the ask starts broad, the friction appears quickly, the scope keeps expanding, and completion matters less than continued iteration.

Max isn’t “learning to code” in the way I learned to code.

He’s learning something else: how to get a computer to produce a specific experience, and how to iterate until it matches the thing in his head.

That’s personal software in its purest form: you want a thing to exist, so you make it, then you live inside it.

I’ve been calling this broader shape the Disposable Intelligence Era: the cost of creating (and discarding) software is collapsing, so the scarce part becomes taste, direction, and iteration.

Max isn’t learning React. He’s learning the loop: imagine → specify → judge → iterate → ship.

When these tools start remembering context (mobile, style, preferences) across chats, the real unlock isn’t “write code faster.” It’s that a kid can grow a persistent world over weeks — and actually live inside it.